Meta-learning richer priors for VAEs

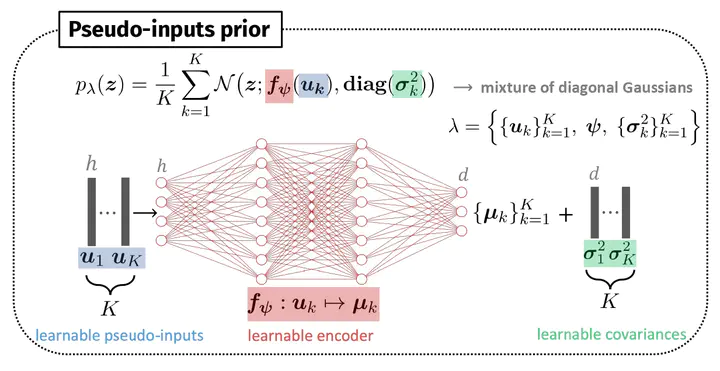

Pseudo-inputs prior

I developed my MSc thesis at the Department of Biomedical Informatics (ETH), supervised by Gunnar Rätsch. In my project I studied, on image datasets, how meta-learning can benefit variational auto-encoders (VAEs), and I proposed two priors that achieve better performance. Specifically, I showed that the two priors lead to better bounds for VAEs and to richer latent representations, even more so when the model is trained in a meta-learning fashion with the MAML algorithm. In addition, meta-learning achieves higher accuracy in unsupervised few-shot classification, evaluated as a downstream task with prototypical networks. Lastly, I studied the behaviour of the proposed priors in transfer and continual learning settings. This experience has been particularly rewarding, as the paper I wrote from my thesis was accepted at the Advances in Approximate Bayesian Inference (AABI) 2022 symposium.